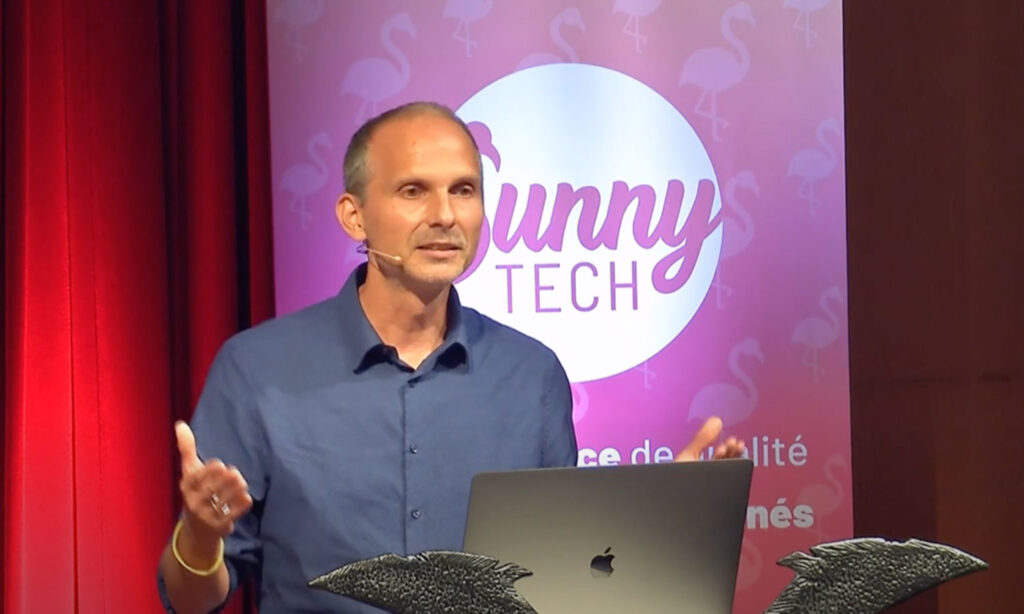

At Teads, we process several billion analytics events per hour. These events are first ingested through a streaming pipeline, then prepared and loaded into our data warehouse, where they are stored and aggregated to produce meaningful customer reports and support business intelligence.

A very important step during ingestion is extracting the data from the streams (Kafka topics) into the log tables of our data warehouse (BigQuery). Here, we have to find the right balance between ingestion speed and transaction costs while maintaining our high data security requirements. In this talk, I would like to show how our batch-based approach has developed over the last three years: from using a managed service like Dataflow inside a VPN, through introducing kConnect, to building our own framework based on Apache Spark.